Heterogeneous RBCs via Deep Multi-Agent Reinforcement Learning

Federico Gabriele, Aldo Glielmo, Marco Taboga

Abstract

Current macroeconomic models with agent heterogeneity can be broadly divided into two main groups. Heterogeneous-agent general equilibrium (GE) models, such as those based on Heterogeneous Agent New Keynesian (HANK) or Krusell-Smith (KS) approaches, rely on GE and 'rational expectations', somewhat unrealistic assumptions that make the models very computationally cumbersome, which in turn limits the amount of heterogeneity that can be modelled. In contrast, agent-based models (ABMs) can flexibly encompass a large number of arbitrarily heterogeneous agents, but typically require the specification of explicit behavioural rules, which can lead to a lengthy trial-and-error model-development process. To address these limitations, we introduce MARL-BC, a framework that integrates deep multi-agent reinforcement learning (MARL) with real business cycle (RBC) models. We demonstrate that MARL-BC can: (1) recover textbook RBC results when using a single agent; (2) recover the results of the mean-field KS model using a large number of identical agents; and (3) effectively simulate rich heterogeneity among agents, a hard task for traditional GE approaches. Our framework can be thought of as an ABM if used with a variety of heterogeneous interacting agents, and can reproduce GE results in limit cases. As such, it is a step towards a synthesis of these often opposed modelling paradigms.

Extracted equations

- Y_t = A_t * K_t^alpha * L_t^(1-alpha)

- K_t = sum_i k_i_t

- L_t = sum_i ell_i_t

- a_i_t = (1 - delta) * k_i_t + w_i_t * ell_i_t + r_i_t * k_i_t

- r_i_t = alpha * A_t * (K_t / L_t)^(alpha - 1) * kappa_i

- w_i_t = (1 - alpha) * A_t * (K_t / L_t)^alpha * lambda_i

- c_i_t = chat_i_t * a_i_t

- k_i_{t+1} = a_i_t - c_i_t

- R_i_t = log(c_i_t) - chi * ell_i_t

- A_t = rho * A_{t-1} + sigma_A * epsilon_t
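The equations above can be read as one period of a simulation environment. The following is a minimal sketch of that reading, not the authors' implementation. The function name `marl_bc_step` and the list-based representation are hypothetical. Two assumptions are made: capital held at the start of the period is what earns the rental rate and depreciates, and the wage is the marginal product of labour implied by the production function, `(1 - alpha) * A_t * (K_t / L_t)^alpha`. Productivity `A` is passed in rather than simulated.

```python
import math


def marl_bc_step(A, k, ell, chat, kappa, lam, alpha=0.33, delta=0.025, chi=1.0):
    """Advance n agents by one period (hypothetical helper, illustrative only).

    k, ell, chat, kappa, lam are equal-length lists: capital holdings, labour
    supplied, consumption fraction in (0, 1), and the two productivity scalings.
    """
    K = sum(k)                      # K_t = sum_i k_i_t
    L = sum(ell)                    # L_t = sum_i ell_i_t
    Y = A * K**alpha * L**(1 - alpha)

    ratio = K / L
    r_base = alpha * A * ratio**(alpha - 1)   # marginal product of capital
    w_base = (1 - alpha) * A * ratio**alpha   # marginal product of labour

    k_next, consumption, rewards = [], [], []
    for k_i, ell_i, chat_i, kappa_i, lam_i in zip(k, ell, chat, kappa, lam):
        r_i = r_base * kappa_i
        w_i = w_base * lam_i
        # Resources available to agent i this period.
        a_i = (1 - delta) * k_i + w_i * ell_i + r_i * k_i
        c_i = chat_i * a_i          # consume a fraction of available resources
        k_next.append(a_i - c_i)    # the remainder is next period's capital
        consumption.append(c_i)
        rewards.append(math.log(c_i) - chi * ell_i)
    return Y, k_next, consumption, rewards


# Two identical-productivity agents with different capital and labour.
Y, k_next, consumption, rewards = marl_bc_step(
    A=1.0, k=[10.0, 12.0], ell=[0.3, 0.4], chat=[0.1, 0.1],
    kappa=[1.0, 1.0], lam=[1.0, 1.0],
)
```

With `kappa_i = lambda_i = 1` for every agent, factor payments sum to `Y_t`, so total resources equal `(1 - delta) * K_t + Y_t`. This gives a quick accounting check on any implementation of the step.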

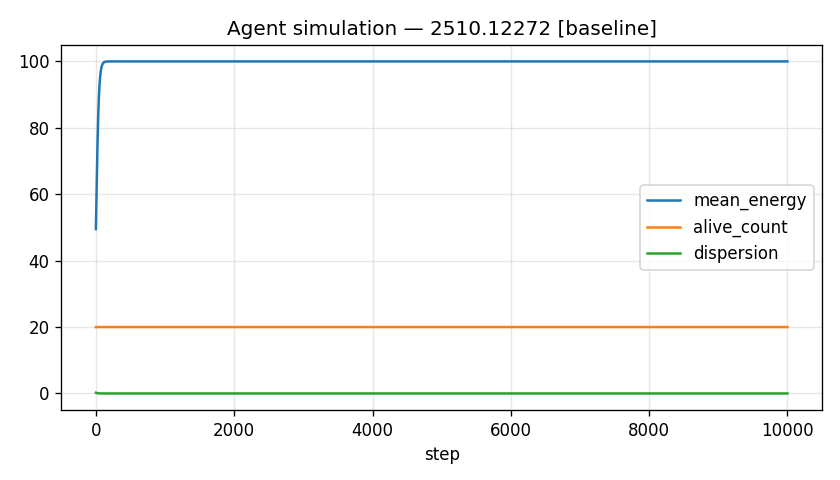

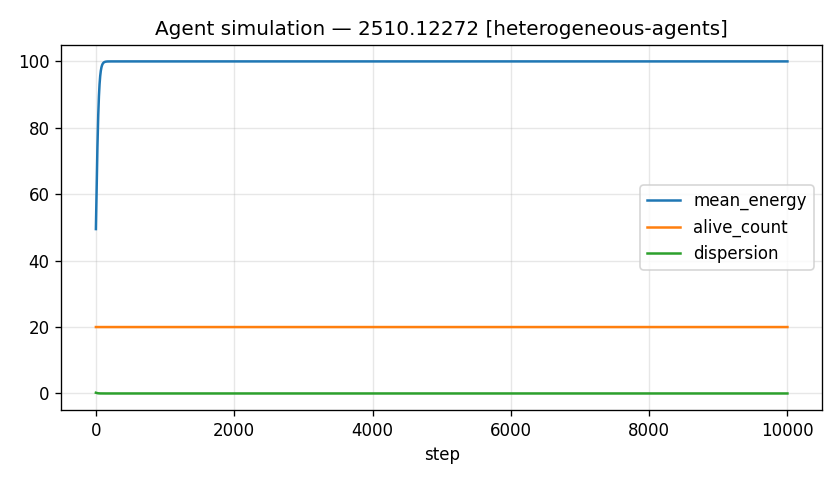

Simulation outputs

Baseline vs. variant

Variant arm: heterogeneous-agents

| Metric | Baseline | Variant | Δ (variant − baseline) |
|---|---|---|---|
| final_mean_energy | 100.0000 | 100.0000 | 0.0000 |
| final_alive_count | 20.0000 | 20.0000 | 0.0000 |
| final_dispersion | 0.0000 | 0.0000 | 0.0000 |
| steps_run | 1.000e+4 | 1.000e+4 | 0.0000 |

Paper claims vs. our run

- MARL-BC recovers textbook RBC results with n=1 agent: not-testable; no fidelity score recorded

- MARL-BC converges to mean-field Krusell-Smith equilibrium as n increases: not-testable; no fidelity score recorded

- MARL-BC can simulate rich heterogeneity in agent productivities: not-testable; no fidelity score recorded

- SAC algorithm achieves stable learning across population sizes n=10 to n=500: not-testable; no fidelity score recorded

- Aggregate behaviour converges to well-defined equilibrium for large n: not-testable; no fidelity score recorded

- Training is computationally feasible on modest hardware (single CPU): not-testable; no fidelity score recorded
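Checking the first claim needs a reference point. One candidate is the deterministic steady state of the textbook single-agent RBC model, sketched below. This benchmark is not taken from the source or from our run. It assumes a single agent with `kappa = lambda = 1`, productivity fixed at `A = 1`, log utility in consumption, and the parameter values listed under Parameters. The function name `rbc_steady_state` is hypothetical.

```python
def rbc_steady_state(alpha=0.33, delta=0.025, beta=0.99, A=1.0):
    """Deterministic steady state of a textbook RBC model (illustrative sketch).

    Uses the steady-state Euler equation 1 = beta * (1 + r - delta), where r is
    the rental rate (marginal product of capital) before depreciation.
    """
    r = 1.0 / beta - 1.0 + delta
    # Invert r = alpha * A * (K/L)^(alpha - 1) for the capital-labour ratio.
    k_over_l = (alpha * A / r) ** (1.0 / (1.0 - alpha))
    w = (1.0 - alpha) * A * k_over_l**alpha
    y_over_l = A * k_over_l**alpha
    return {"r": r, "w": w, "K_over_L": k_over_l, "Y_over_L": y_over_l}


steady_state = rbc_steady_state()
```

A trained n=1 agent whose long-run capital-labour ratio settles near `steady_state["K_over_L"]` would be consistent with the claim. The labour level itself depends on the disutility term and is not pinned down by this sketch.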

Parameters

| Parameter | Value |
|---|---|
| alpha | 0.33 |
| delta | 0.025 |
| beta | 0.99 |
| chi | 1 |
| rho | 0.95 |
| sigma_A | 0.01 |
| n_agents | 20 |
| kappa_i_range | [0.98,1.02] |
| lambda_i_range | [0.98,1.02] |
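The table gives only ranges for `kappa_i` and `lambda_i`, not how individual values are assigned. The sketch below shows one way to build a heterogeneous population from these settings. It assumes independent uniform draws within each range, which the source does not confirm. The names `Agent` and `sample_agents` are hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    kappa: float  # capital-productivity scaling, multiplies the rental rate
    lam: float    # labour-productivity scaling, multiplies the wage


def sample_agents(n_agents=20, kappa_range=(0.98, 1.02),
                  lambda_range=(0.98, 1.02), seed=0):
    """Draw per-agent scalings uniformly from the given ranges (assumption)."""
    rng = random.Random(seed)  # local generator keeps runs reproducible
    return [
        Agent(kappa=rng.uniform(*kappa_range), lam=rng.uniform(*lambda_range))
        for _ in range(n_agents)
    ]


agents = sample_agents()
```

Passing the same `seed` for the baseline and variant arms keeps the two populations comparable. Setting both ranges to `(1.0, 1.0)` gives the identical-agent case.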

Run notes

model_type=generic; ran baseline + heterogeneous-agents